Ghosts From the Darkness

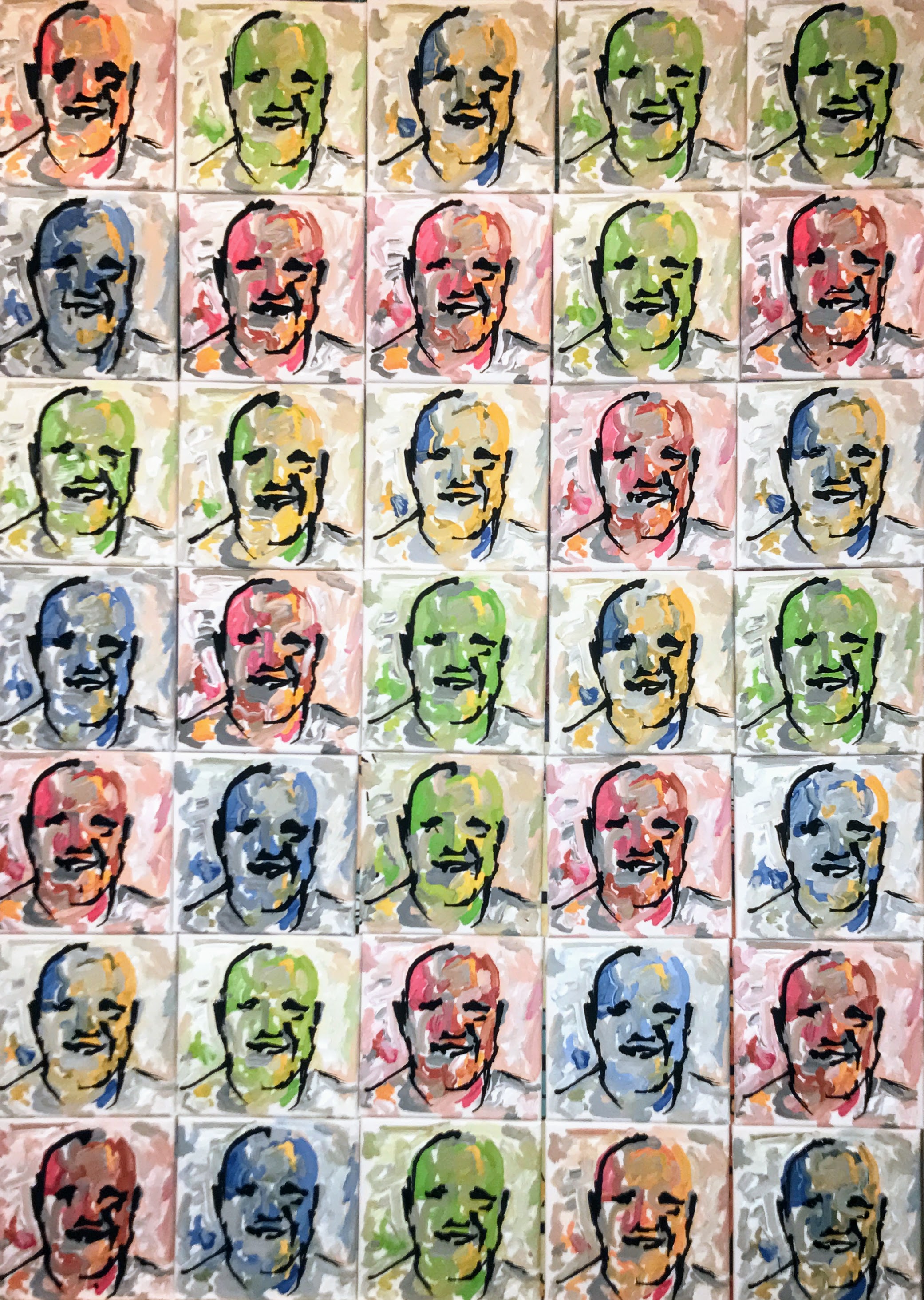

Been painting dozens and dozens of portraits of faces imagined by a GAN I implemented. These are haunting. I am fascinated as the faces begin emerging from the algorithm and try to capture them right as they begin to take shape and become recognizable as faces. Here are two recent paintings...

Can robots be creative? They Probably Already Are...

In this video I demonstrate many of the algorithms and approaches I have programmed into my painting robots in an attempt to give them creative autonomy. I hope to demonstrate that it is no longer a question of whether machines can be creative, but only a debate of whether their creations can be considered art.

So can robots make art?

Probably not.

Can robots be creative?

Probably, and in the artistic discipline of portraiture, they are already very close to human parity.

Pindar Van Arman

Are My Robots Finally Creative?

After twelve years of trying to teach my robots to be more and more creative, I think I have reached a milestone. While I remain the artist of course, my robots no longer need any input from me to create unique original portraits.

I will be releasing a short video with details shortly, but as can be seen in the slide above from a recent presentation, my robots can "imagine" faces with GANs, "imagine" a style with CANs, then paint the imagined face in the imagined style using CNNs. All the while evaluating its own work and progress with Feedback Loops. Furthermore, the Feedback Loops can use more CNNs to understand context from its historic database as well as find images in its own work and adjust painting both on a micro and macro level.

This process is so similar to how I paint portraiture, that I am beginning to question if there is any real difference between human and computational creativity. Is it art? No. But it is creative.

HBO Vice Piece on CloudPainter - The da Vinci Coder

Typically the puns applied to artistic robots make me cringe, but I actually liked HBO Vice's name for their segment on CloudPainter. They called me The Da Vinci Coder.

Spent the day with them a couple of weeks ago and really enjoyed their treatment of what I am trying to do with my art. Not sure how you can access HBO Vice without HBO, but if you can, it is a good description of where the state of the art is with artificial creativity. If you can't, here are some stills from the episode and a brief description...

Hunter and I working on setting up a painting...

One of my robots working on a portrait...

Elle asking some questions...

Cool shot of my paint covered hands...

One of my robots working on a portrait of Elle...

... and me walking Elle through some of the many algorithms, both borrowed and invented, that I use to get from a photograph of her to a finished stylized portrait below.

Robot Art 2017 - Top Technical Contributor

CloudPainter used deep learning, various open source AI, and some of our own custom algorithms to create 12 paintings for the 2017 Robot Art Contest. The robot and its software were awarded the Top Technical Contribution Award, while the artwork it produced received 3rd place in the aesthetic competition. You can see the other winners and competitors at www.robotart.org.

Below are some of the portraits we submitted.

Portrait of Hank

Portrait of Corinne

Portrait of Hunter

We chose to go an abstract route in this year's competition by concentrating on computational abstraction. But not random abstraction. Each image began with a photoshoot, where CloudPainter's algorithms would then pick a favorite photo, create a balanced composition from it, and use Deep Learning to apply purposeful abstraction. The abstraction was not random but based on an attempt to learn from the abstraction of existing pieces of art whether it was from a famous piece, or from a painting by one of my children.

Full description of all the individual steps can be seen in the following video.

NVIDIA GTC 2017 Features CloudPainter's Deep Learning Portrait Algorithms

CloudPainter was recently featured in NVIDIA's GTC 2017 Keynote. As deep learning finds its way into more and more applications, this video highlights some of the more interesting ones. Our ten seconds come around 100 seconds in, but I suggest watching the whole thing to see where the current state of the art in artificial intelligence stands.

TensorFlow Dev Summit 2017 cont...

Matt and I had a long day listening to some of the latest breakthroughs in deep learning, specifically those related to TensorFlow. Some standouts included a Stanford student who had created a neural net to detect skin cancer. Also liked Doug Eck's talk about artificial creativity. Jeff Dean had a cool keynote, and I got to learn about TensorBoard from Dandelion Mane. One of my favorite parts of the summit was getting shout-outs from, and later talking to, both Jeff Dean and Doug Eck. The shout-outs to cloudpainter during Jeff's keynote and Eck's session, and lots of pics, can be seen below. This is mostly for my memories.

Inspiration from the National Portrait Gallery

One of the best things about Washington D.C. is its public art museums. There are about a dozen or so world class galleries where you are allowed to take photos and use the work in your own art, because after all, we the people own the paintings. Excited by the possibilities of deep learning and how well style transfer was working, the kids and I went to the National Portrait Gallery for some inspiration.

One of the first things that occurred to us was a little Inception-like. What would happen if we applied style transfer to a portrait using itself as the source image? It didn't turn out that well, but here are a couple of those anyways.

While this idea was kind of a dead end, the next idea that came to us was a little more promising. Looking at the successes and failures of the style transfers we had already performed, we started noticing that when the context and composition of the paintings matched, the algorithm was a lot more successful artistically. This is of course obvious in hindsight, but we are still working to understand what is happening in the deep neural networks, and anything that can reveal anything about that is interesting to us.

So the idea we had, which was fun to test out, was to try and apply the style of a painting to a photo that matched the painting's composition. We selected two famous paintings from the National Portrait Gallery to attempt this, de Kooning's JFK and Degas's Portrait of Miss Cassatt. We used JFK on a photo of Dante with a tie on. We also had my mother pose as best she could to resemble how Cassatt was seated in her portrait. We then let the deep neural net do its work. The following are the results. Photos courtesy of the National Portrait Gallery.

Farideh likes how her portrait came out, as do we, but it's interesting that this only goes to further demonstrate that there is so much more to a painting than just its style, texture, and color. So what did we learn? Well, we knew it already, but we need to figure out how to deal with texture and context better.

Applying Style Transfer to Portraits

Hunter and I have been focusing on reverse engineering the three most famous paintings according to Google, as well as a hand-selected piece from the National Gallery. These artworks are The Mona Lisa, The Starry Night, The Scream, and Woman With A Parasol.

We also just recently got Style Transfer working on our own TensorFlow system. So naturally we decided to take a moment to see how a neural net would paint using the four paintings we selected plus a second work by Van Gogh, his Self-Portrait (1889).

Below is a grid of the results. Across the top are the images from which style was transferred, and down the side are the images the styles were applied to. (Once again a special thanks to deepdreamgenerator.com for letting us borrow some of their processing power to get all these done.)

It is interesting to see where the algorithm did well and where it did little more than transfer the color and texture. A good example of where it did well can be seen in the last column. Notice how the composition of the source style and the portrait it is being applied to line up almost perfectly. As could be expected, this resulted in a good transfer of style.

As for failures, it is easy to notice lots of limitations. Foremost, I noticed that the photo being transferred needs to be high quality for the transfer to work well. Another problem is that the algorithm has no idea what it is doing with regard to composition. For example, in The Scream style transfers, it paints a sunset across just about everyone's forehead.

We are still in the process of creating a step by step animation that will show one of the portraits having the style applied to it. It will be a little while though, because I am running it on a computer that can only generate one frame every 30 minutes. This is super processor intensive stuff.

While the processor is working on that, we are going to go and see if we can't find a way to improve upon this algorithm.

Channeling Picasso with Style Transfer and Google's TensorFlow

We are always jumping back and forth between hardware and software upgrades to our painting robot. This week it's the software. Pleased to report that we now have our own implementation of Dumoulin, Shlens, and Kudlur's Style Transfer. This of course is the Deep Learning algorithm that allows you to recreate content in the style of a source painting.

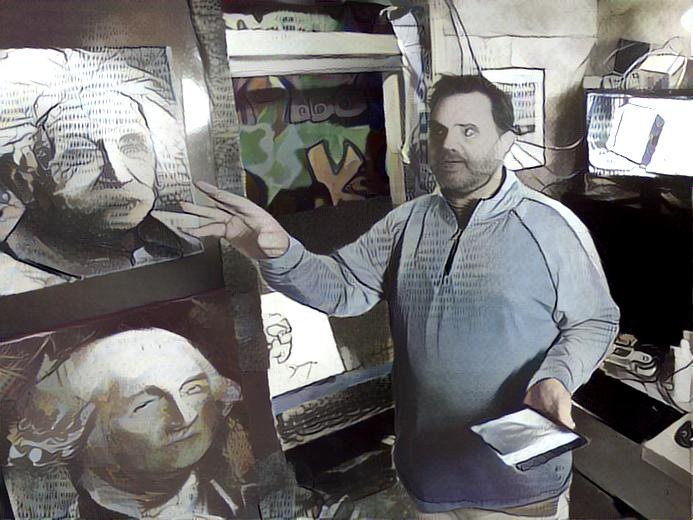

The first image that we successfully created was made by transferring the style of Picasso's Guernica into a portrait of me in my studio.

So here are the two images we started with.

And the following is the image that the neural networks came up with.

I was able to get this neural net working thanks in large part to the step-by-step tutorial in this amazing blog post by LO at http://www.chioka.in/tensorflow-implementation-neural-algorithm-of-artistic-style. The cool thing about the Deep Learning community is that I found half a dozen good tutorials. So if this one doesn't work out for you, just search out another.

Even cooler though, is that you don't even need to set up your own implementation. If you want to do your own Style Transfers, all you have to do is head on over to the Deep Dream Generator at deepdreamgenerator.com. On this site you can upload pictures and have their implementation generate your own custom Style Transfers. There is even a way to upload your own source images and play with the settings.

Below is a grid of images I created on the Deep Dream Generator site using the same content and source image that I used in my own implementation. In them, I played around with the Style Scale and Style Weight settings. Top row has Scale set to 1.6, while second row is 1, and third is 0.4. First column has the Weight set to 1, while second is at 5 and third is at 10.

So while I suggest you go through the pains of setting up your own implementation of Style Transfer, you don't even have to. Deep Dream Generator lets you perform 10 style transfers an hour.

For us on the other hand, we need our own generator as this technology will be closely tied into all robot paintings going forward.

Capturing Monet's Style with a Robot

As we gather data in an attempt to recreate the style and brushstroke of old masters with Deep Learning, we thought we would show you one of the ways we are collecting data. And it is pretty simple actually. We are hand painting brushstrokes with a 7BOT robotic arm and recording the kinematics behind the strokes. It is a simple trace and record operation where the robotic arm's position is recorded 30 times a second and saved to a file.
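For anyone curious what a trace and record loop like this might look like, here is a minimal sketch. It is not our actual recorder: `read_pose` is a hypothetical stand-in for whatever call your arm's SDK provides to read its joint positions, and the JSON file layout is an assumption made only for illustration.

```python
import json
import time
from typing import Callable, List, Sequence

SAMPLE_HZ = 30  # the arm's position is recorded 30 times a second


def record_trace(read_pose: Callable[[], Sequence[float]],
                 duration_s: float,
                 sample_hz: int = SAMPLE_HZ,
                 sleep: Callable[[float], None] = time.sleep) -> List[dict]:
    """Sample the arm pose at a fixed rate and return timestamped samples.

    read_pose is a hypothetical callback returning the current joint
    positions; swap in the real call from your arm's SDK.
    """
    period = 1.0 / sample_hz
    n_samples = int(round(duration_s * sample_hz))
    samples = []
    for i in range(n_samples):
        samples.append({"t": round(i * period, 4), "pose": list(read_pose())})
        sleep(period)  # simple pacing; ignores the time spent reading the pose
    return samples


def save_trace(samples: List[dict], path: str) -> None:
    """Write the recorded samples to a JSON file (assumed format)."""
    with open(path, "w") as f:
        json.dump(samples, f)
```

Playing a trace back would then be a matter of reading the file and sending each pose to the arm at the same rate.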

As can be seen in the picture above, all Hunter had to do was trace the brush strokes he saw in the painting. He did this for a number of colors and when he was done, we were able to play the strokes back to see how well the robot understood our instructions. As can be seen in the following video, the playback was a disaster. But that doesn't matter to us that much. We are not interested in the particular strokes as much as we are in analyzing them for use in the Deep Learning algorithm we are working on.

Woman With A Parasol is the fourth Masterpiece we have begun collecting data for. As this is an open source project, we will be making all the data we collect public. For example, if you have a 7Bot, or similar robotic arm with 7 actuators, here are the files that we used to record the strokes and make the horrible reproduction.

Selecting Masterpieces to Recreate with Our Robot

Spent the afternoon with Hunter exploring the National Gallery of Art to decide on the next masterpiece we are going to recreate and analyze with our robot. Saw the da Vinci, many Van Goghs, and lots of other paintings before being drawn to Monet. And as we looked around at several Monets, it became obvious that he had a special artistic style that would lend itself well to replication by our robot and Deep Learning algorithms. In the end Hunter and I decided on the artwork on the left below - Monet's "Woman with a Parasol."

Now if we could somehow program our robots to capture and paint the wind across her face like Monet did. Wow, that would be amazing.

Stroke Maps, TensorFlow, and Deep Learning

Just completed recreations of The Scream and The Mona Lisa. These are not meant to be accurate reproductions of the paintings, but an attempt at recreating how the artists painted each stroke. The idea being that once these strokes are mapped, TensorFlow and Deep Learning can use the data to make better pastiches.

Work Continues Mapping the Brushstrokes of Famous Masterpieces

Once I created a brushstroke map of Edvard Munch's The Scream, I thought it would be cool to have brushstroke mappings for more iconic artworks. So I googled "famous paintings" and was presented with a rather long list. Interestingly The Scream was in the top three along with da Vinci's Mona Lisa and Van Gogh's Starry Night. Well, why not do the top three? So work has begun on creating a stroke map for The Mona Lisa. In the following image, the AI has taken care of laying down an underpainting, or what would have been called a cartoon in da Vinci's time.

I am now going into it by hand and finger-swiping my best guess as to how da Vinci would have applied his brushstrokes. Will post the final results as well as provide access to the Elasticsearch database with all the strokes as soon as it is finished. My hope is that the creation of the brushstroke mappings can be used to better understand these artists, and how artists create art in general.

A Deeper Learning Analysis of The Scream

Am a big fan of what The Google Brain Team, specifically scientists Vincent Dumoulin, Jonathon Shlens, and Manjunath Kudlur, have accomplished with Style Transfer. In short they have developed a way to take any photo and paint it in the style of a famous painting. The results are remarkable as can be seen in the following grid of original photos painted in the style of historical masterpieces.

However, as can be seen in the following pastiches of Munch's The Scream, there are a couple of systematic failures with the approach. The Deep Learning algorithm struggles to capture the flow of the brushstrokes or "match a human level understanding of painting abstraction." Notice how the only thing truly transferred is color and texture.

Seeing this limitation, I am currently attempting to improve upon Google's work by modeling both the brushstrokes and abstraction. In the same way that the color and texture are being successfully transferred, I want the actual brushstrokes and abstractions to resemble the original artwork.

So how would this be possible? While I am not sure how to achieve artistic abstraction, modeling the brushstrokes is definitely doable. So let's start there.

To model brushstrokes, Deep Learning would need brushstroke data, lots of brushstroke data. Simply put, Deep Learning needs accurate data to work. In the case of Google's successful pastiches (images made in the style of an artwork), the data was found in the image of the masterpieces themselves. Deep Neural Nets would examine and re-examine the famous paintings on a micro and macro level to build a model that can be used to convert a provided photo into the painting's style. As mentioned previously, this works great for color and texture, but fails with the brushstrokes because it doesn't really have any data on how the artist applied the paint. While strokes can be seen on the canvas, there isn't a mapping of brushstrokes that could be studied and understood by the Deep Learning algorithms.
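One plausible way to see why color and texture survive while stroke flow does not: the style loss introduced in the original neural style transfer work (Gatys et al.) compares Gram matrices of feature maps, and a Gram matrix sums over every position in the image. The sketch below is plain Python on toy numbers, not a real network, and it assumes the implementation in question uses this kind of Gram-based loss. It shows that rearranging where features occur leaves the style statistics untouched.

```python
from typing import List

Matrix = List[List[float]]


def gram_matrix(features: Matrix) -> Matrix:
    """Channel-by-channel correlations, averaged over positions.

    features[c][p] is the activation of channel c at position p.
    Summing over p throws away where in the image each feature occurred.
    """
    n_positions = len(features[0])
    return [[sum(a * b for a, b in zip(fi, fj)) / n_positions
             for fj in features]
            for fi in features]


def style_loss(style_features: Matrix, generated_features: Matrix) -> float:
    """Sum of squared differences between the two Gram matrices."""
    g_style = gram_matrix(style_features)
    g_generated = gram_matrix(generated_features)
    return sum((s - g) ** 2
               for row_s, row_g in zip(g_style, g_generated)
               for s, g in zip(row_s, row_g))


# Two toy "feature maps": same activations, positions rearranged.
original = [[1.0, 2.0, 3.0], [4.0, 5.0, 6.0]]
rearranged = [[3.0, 1.0, 2.0], [6.0, 4.0, 5.0]]
print(style_loss(original, rearranged))  # the loss cannot tell them apart
```

In other words, a loss like this has no notion of the order or direction in which paint was laid down, which is exactly the information a stroke map would add.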

As I pondered this limitation, I realized that I had this exact data, and lots of it. I have been recording detailed brushstroke data for almost a decade. For many of my paintings each and every brushstroke has been recorded in a variety of formats including time-lapse videos, stroke maps, and most importantly, a massive database of the actual geometric paths. And even better, many of the brushstrokes were crowd sourced from internet users around the world - where thousands of people took control of my robots to apply millions of brushstrokes to hundreds of paintings. In short, I have all the data behind each of these strokes, all just waiting to be analyzed and modeled with Deep Learning.

This was when I looked at the systematic failures of pastiches made from Edvard Munch's The Scream, and realized that I could capture Munch's brushstrokes and as a result make a better pastiche. The approach to achieve this is pretty straightforward, though labor intensive.

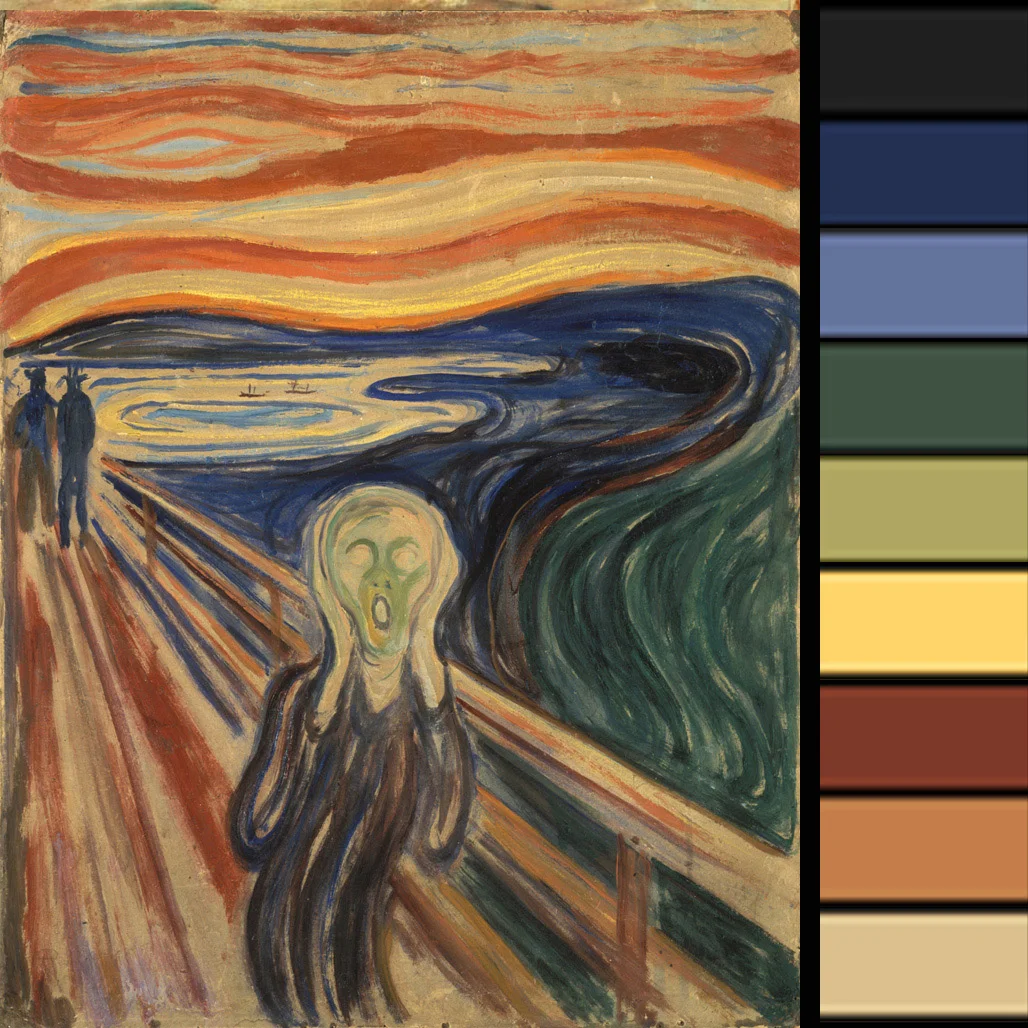

This process all begins with the image and a palette. I have no idea what Munch's original palette was, but the following is an approximate representation made by running his painting through k-means clustering and some of my own deduction.

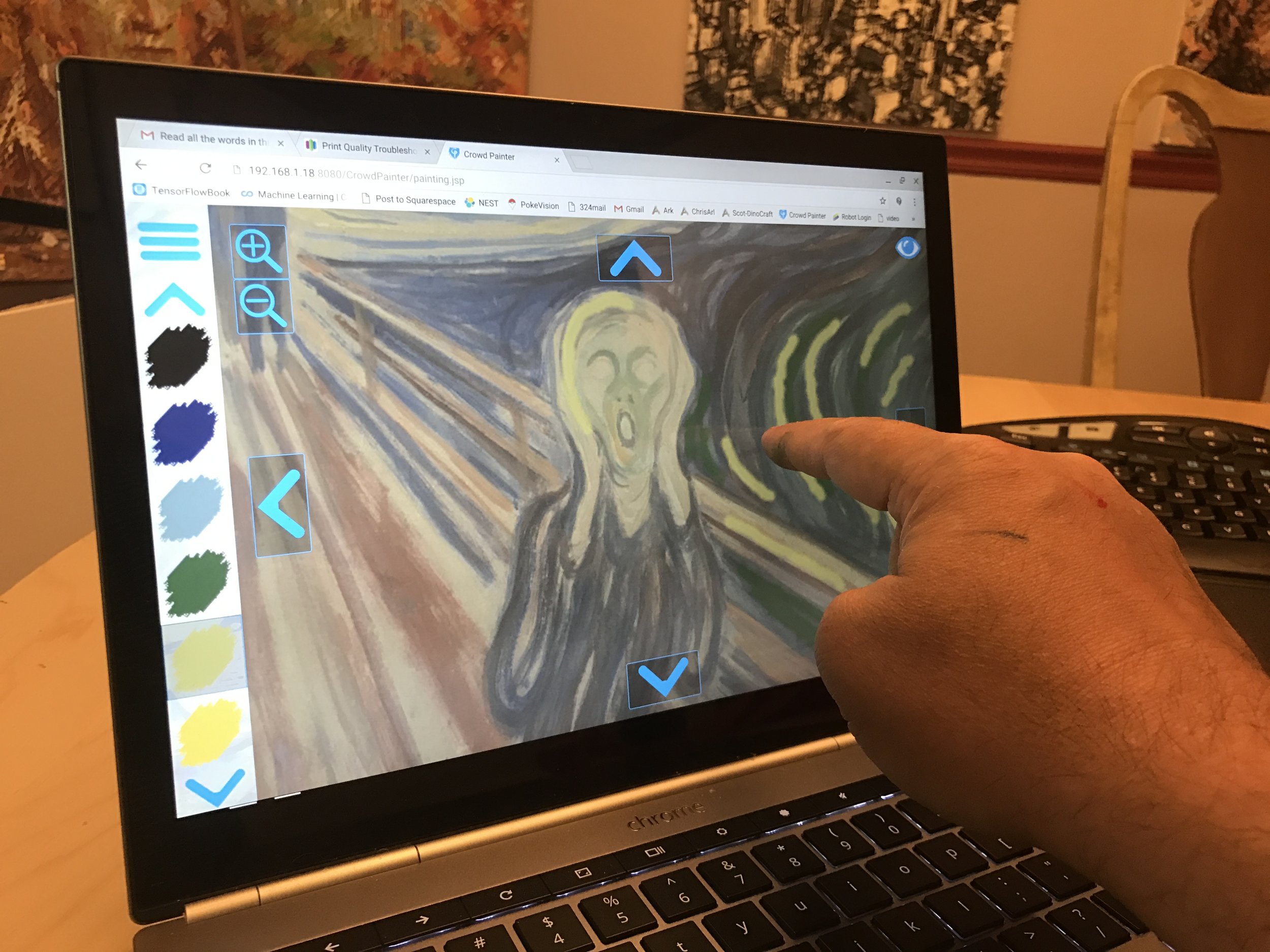
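For readers who want to try the k-means step themselves, here is a minimal sketch in plain Python. It is not the code behind the palette above (which also involved some hand deduction). The function name, the iteration count, and the seeded random start are all assumptions made for illustration, and a real image would first need its pixels loaded into a list of RGB tuples.

```python
import random
from typing import List, Tuple

Color = Tuple[float, float, float]


def kmeans_palette(pixels: List[Color], k: int,
                   iterations: int = 20, seed: int = 0) -> List[Color]:
    """Cluster RGB pixels into k groups and return the cluster centers."""
    rng = random.Random(seed)
    centers = rng.sample(pixels, k)  # start from k pixels picked at random
    for _ in range(iterations):
        clusters: List[List[Color]] = [[] for _ in range(k)]
        for p in pixels:
            # Assign each pixel to the nearest center (squared RGB distance).
            nearest = min(
                range(k),
                key=lambda i: sum((a - b) ** 2 for a, b in zip(p, centers[i])),
            )
            clusters[nearest].append(p)
        # Move each center to the mean of its cluster; keep it if empty.
        centers = [
            tuple(sum(channel) / len(cluster) for channel in zip(*cluster))
            if cluster else centers[i]
            for i, cluster in enumerate(clusters)
        ]
    return centers


# Tiny example: two reddish pixels and two bluish pixels.
pixels = [(250, 0, 0), (255, 10, 0), (0, 0, 250), (5, 0, 255)]
print(sorted(kmeans_palette(pixels, k=2)))
```

Each returned center is the average color of one cluster, so the output reads as a rough palette of the dominant colors.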

With the painting and palette in hand, I then set cloudpainter up to paint in manual mode. To paint a replica, all I did was trace brushstrokes over the image on a touch screen display. The challenging part is painting the brushstrokes in the manner and order that I think Edvard Munch may have done them. It is sort of an historical reenactment.

As I paint with my finger, these strokes are executed by the robot.

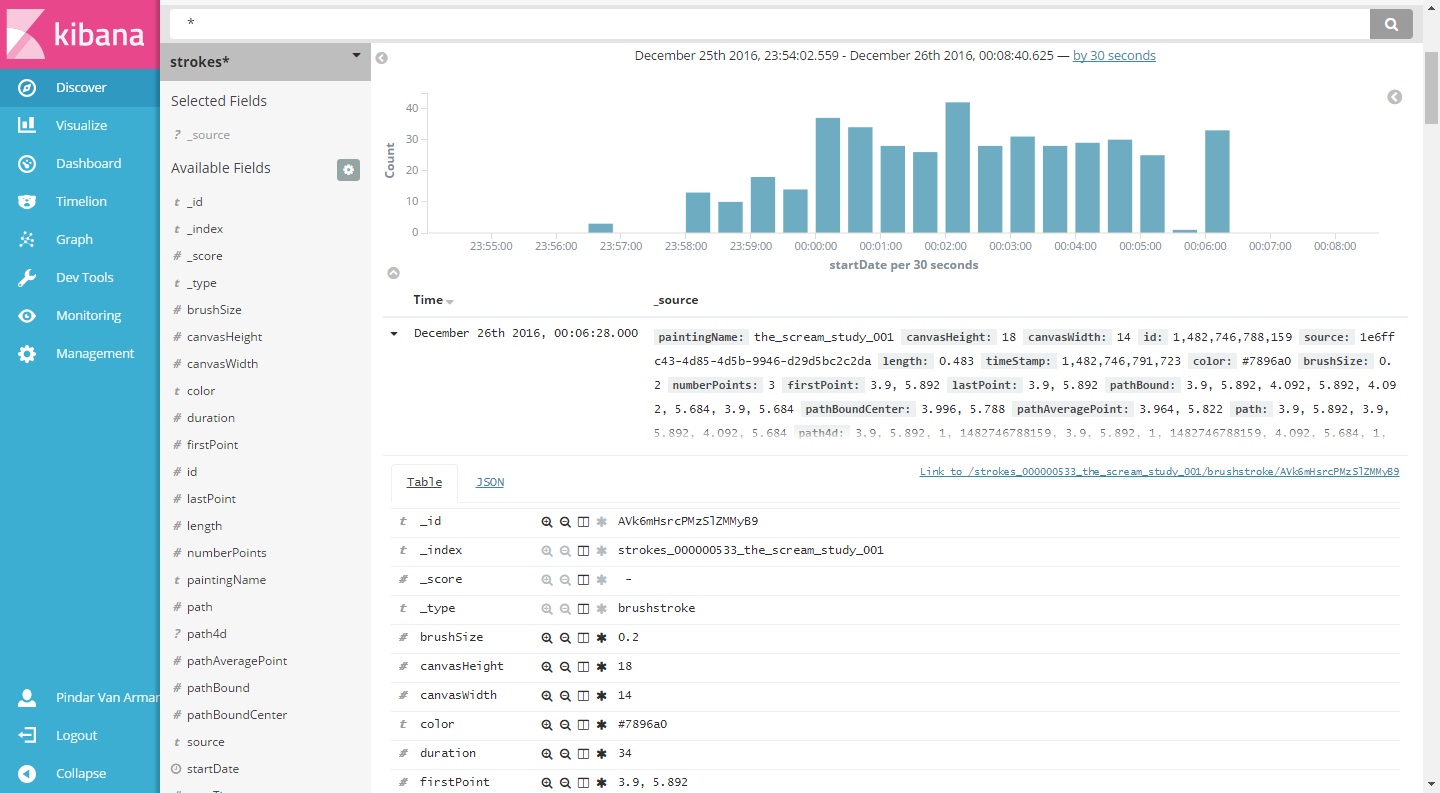

More importantly, each brushstroke is saved in an Elasticsearch database with detailed information on its exact geometry and color.

At the conclusion of this replica, detailed data exists for each and every brushstroke to the thousandth of an inch. This data can then be used as the basis for an even deeper Deep Learning analysis of Edvard Munch's The Scream. An analysis beyond color and texture, where his actual brushstrokes are modeled and understood.
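To give a feel for what a stored stroke might contain, here is a sketch of one brushstroke record serialized to JSON, the document format Elasticsearch stores. The field names are hypothetical and are not the actual cloudpainter schema; the only details taken from the description above are that each stroke carries its geometry and color, and that positions are kept to the thousandth of an inch.

```python
import json
from dataclasses import asdict, dataclass
from typing import List, Tuple


@dataclass
class Stroke:
    """One recorded brushstroke (hypothetical field names)."""
    painting: str
    order: int                           # position in the painting sequence
    color_rgb: Tuple[int, int, int]
    path_in: List[Tuple[float, float]]   # (x, y) points along the stroke, inches


def to_document(stroke: Stroke) -> str:
    """Serialize a stroke to JSON, rounding coordinates to 0.001 inch."""
    doc = asdict(stroke)
    doc["path_in"] = [[round(x, 3), round(y, 3)] for x, y in stroke.path_in]
    return json.dumps(doc)


stroke = Stroke("The Scream", 1, (200, 80, 30), [(1.23456, 2.0), (1.5, 2.25)])
print(to_document(stroke))
```

Keeping the stroke order alongside the geometry is what lets a replica double as a record of how the painting was built up, not just what it ended up looking like.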

So this brings us to whether or not abstraction can be captured. And while I am not sure that it can, I think I have an approach that will work at least some of the time. To this end, I will be adding a second set of data that labels the context of The Scream. This will include geometric bounds around the various areas of the painting and be used to consider the subject matter in the image. So while The Google Brain Team used only an image of the painting for its pastiches, the process that I am trying to perfect will consider the original artwork, the brushstrokes, and how each brushstroke was applied to different parts of the painting.

Ultimately, I believe that by considering all three of these data sources, a pastiche made from The Scream will more accurately replicate the style of Edvard Munch.
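As a rough illustration of the context labels, the sketch below tags a stroke with the region of the painting that most of its points fall in. The rectangular bounds, the region names, and the majority-vote rule are all assumptions for the sake of the example; the real labeling may well use different shapes and rules.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple

Point = Tuple[float, float]


@dataclass
class Region:
    """A labeled rectangular area of the painting (hypothetical labels)."""
    label: str
    x_min: float
    y_min: float
    x_max: float
    y_max: float

    def contains(self, p: Point) -> bool:
        x, y = p
        return self.x_min <= x <= self.x_max and self.y_min <= y <= self.y_max


def label_stroke(path: List[Point], regions: List[Region]) -> Optional[str]:
    """Return the label of the region containing most of the stroke's points."""
    counts: Dict[str, int] = {}
    for p in path:
        for region in regions:
            if region.contains(p):
                counts[region.label] = counts.get(region.label, 0) + 1
                break  # count each point once, for the first matching region
    if not counts:
        return None  # the stroke lies outside every labeled region
    return max(counts, key=lambda label: counts[label])


regions = [Region("sky", 0, 0, 10, 4), Region("figure", 3, 4, 7, 10)]
print(label_stroke([(1, 1), (2, 2), (5, 5)], regions))
```

With labels like these attached, strokes can be grouped by what they depict, so sky strokes and figure strokes can be studied separately.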

So yes, these are lofty goals and I am getting ahead of myself. First I need to collect as much brushstroke data as possible and I leave you now to return to that pursuit.